ChatGPT for Customer Service: 5 Key Risks and How to Mitigate Them

AI customer service tools are everywhere, and more businesses are asking whether it is safe to let ChatGPT handle real support queries that involve customer data, internal documents, and order details.

There's no simple yes or no. This article breaks down the five main risks of using ChatGPT for enterprise support, with practical mitigation strategies for each.

How ChatGPT Handles Business Data

Before getting into the risks, one foundational fact: when you use ChatGPT's consumer interface or call the API directly, the messages you send are transmitted to OpenAI's servers for processing.

OpenAI's current data handling policies break down as follows:

- API calls: data is not used for model training, but is processed by OpenAI's systems and retained for up to 30 days (by default) for safety monitoring

- ChatGPT web interface: OpenAI may use conversation data to improve its models, depending on whether the user has opted out

None of this means businesses should abandon AI. It means businesses need to know exactly what data they're sending.

Risk 1: Customer Data Privacy Exposure

How It Manifests

When a customer service chatbot routes user conversations through the ChatGPT API, the following may be transmitted to third-party servers:

- Customer names, contact details, and account information

- Order details, purchase history, and payment information

- Identity information or health-related data submitted in conversation

- Company information or complaint content entered directly in chat

Real Compliance Exposure

Data protection regulations such as GDPR (EU), CCPA (California), and PDPA (Thailand) hold businesses accountable for how they handle user data, including data they pass to third-party processors.

Routing user data to an external AI service without a signed data processing agreement (DPA) may constitute a compliance violation.

Mitigation

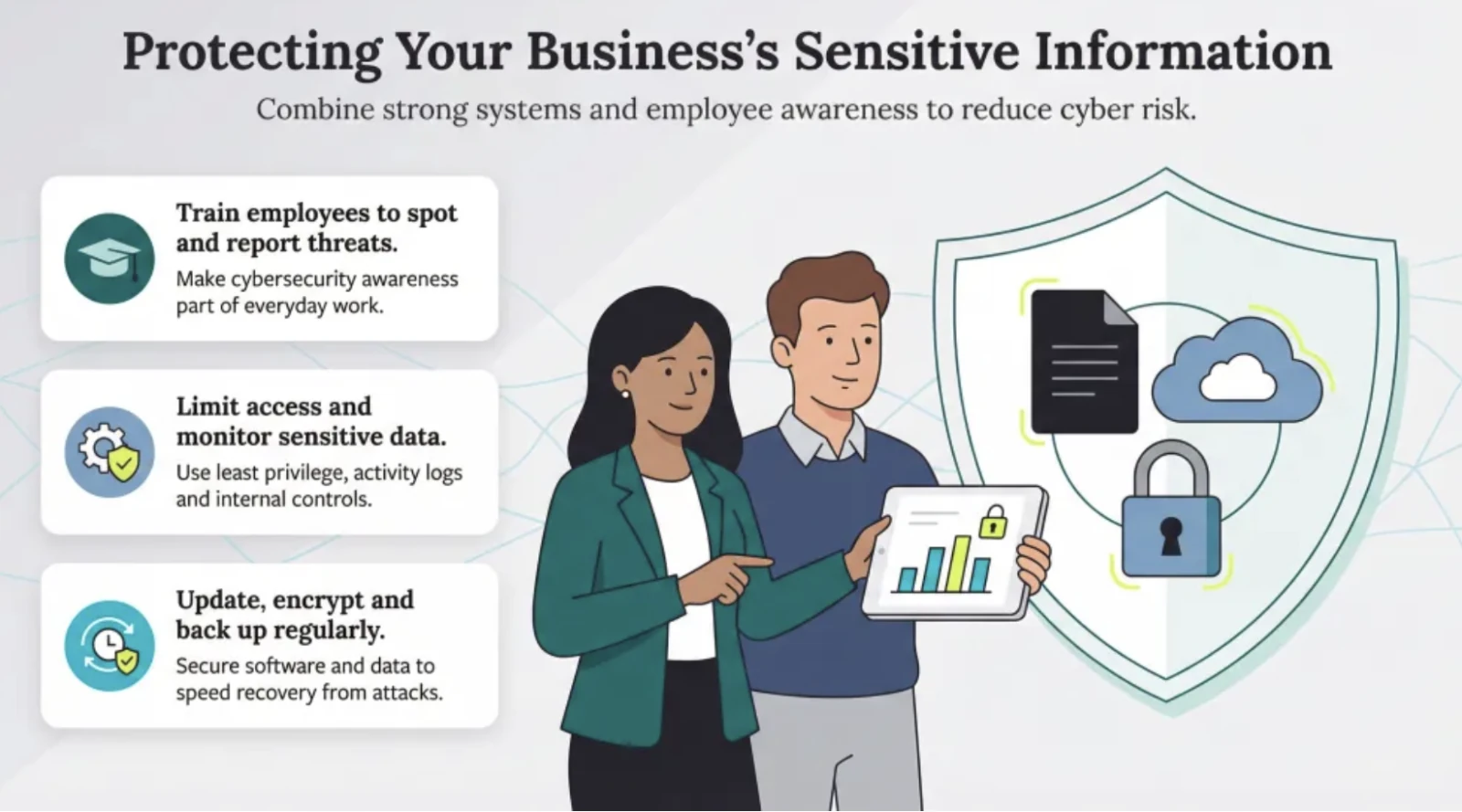

Establish a clear data flow policy that prevents your chatbot from sending customer PII to external AI services.

When evaluating enterprise platforms, choose vendors that can provide explicit data residency commitments and a signed DPA.
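
One way to enforce a data flow policy like this is to redact obvious PII before a message leaves your infrastructure. The sketch below is a minimal illustration, not a complete detector: the `redact_pii` function and the three regular expressions are assumptions made for this example, and real deployments usually need broader coverage (names, addresses, national IDs) from a dedicated tool.

```python
import re

# Illustrative patterns only (assumed for this example).
# Order matters: card numbers are replaced before the looser phone pattern runs.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def redact_pii(text: str) -> str:
    """Replace each matched span with a placeholder such as [EMAIL]."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


message = (
    "Email me at jane.doe@example.com or call +1 (415) 555-0132 "
    "about card 4111 1111 1111 1111."
)
safe_message = redact_pii(message)
```

Only `safe_message` would be passed to the external service. Short numbers such as order IDs are left untouched by these patterns, so the chatbot can still look up an order.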

Risk 2: Internal Knowledge Leakage

How It Manifests

To get ChatGPT to answer questions about their own products, many teams paste internal documents directly into the prompt. That turns a convenience into an information security problem, because the pasted material often includes:

- Unreleased product roadmaps or pricing strategies

- Service terms and commercial conditions from customer contracts

- Internal operating procedures, HR policies, or financial data

- Unpublished R&D results or go-to-market strategy

Real Business Impact

Sending sensitive internal documents to an external AI service — even with low breach probability — means your organization has lost full control over that data.

For content that includes trade secrets, that loss of control is itself a risk.

Mitigation

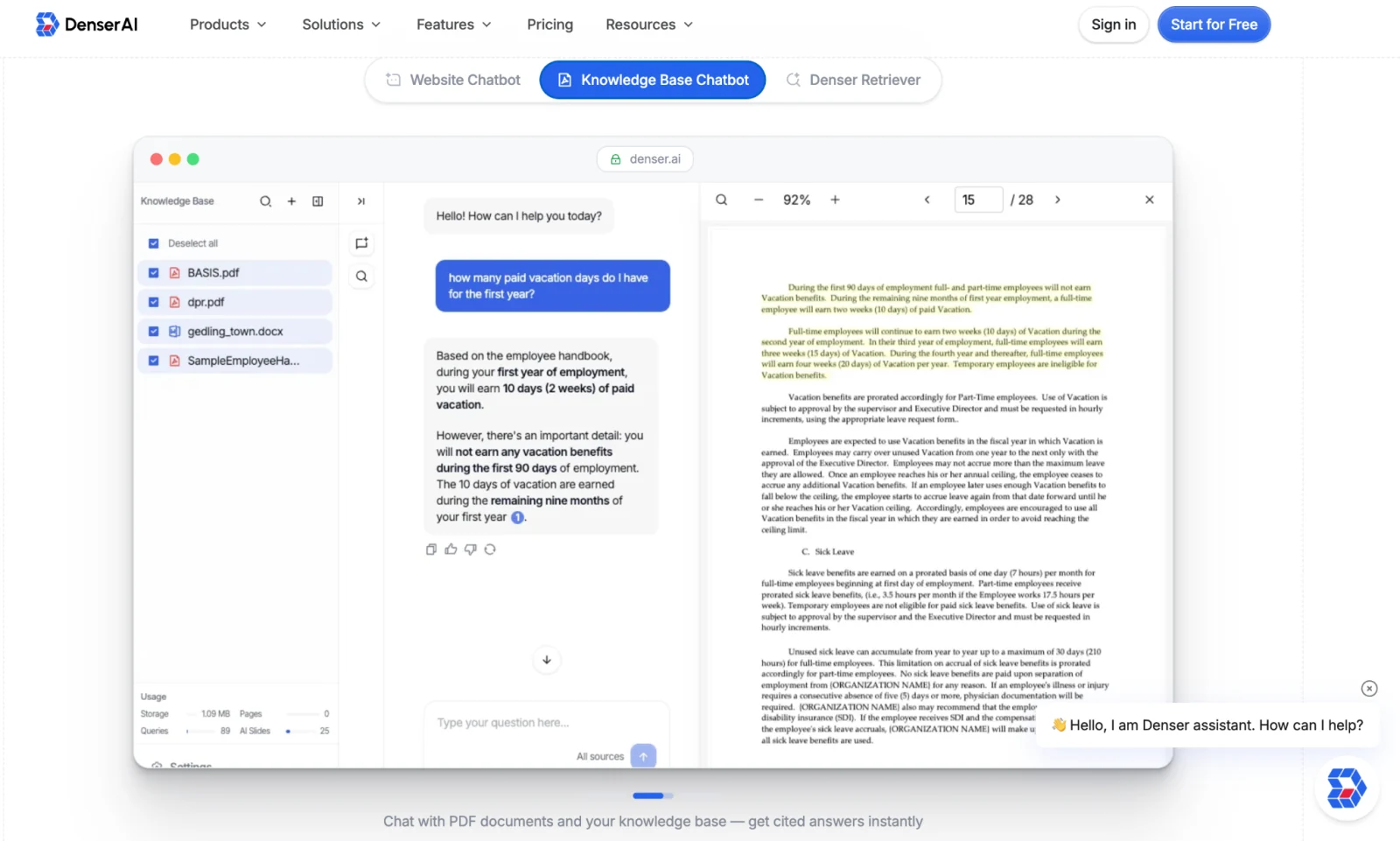

Use a closed knowledge base architecture that restricts the chatbot to authorized, public-facing content (help center, product pages) — not a firehose of internal documents.

Through a RAG architecture, the chatbot retrieves content locally and sends only the relevant passage alongside the user's question — not an entire document packed into the prompt.
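
The retrieve-then-send pattern described above can be sketched as follows. This is a toy illustration under stated assumptions: the `Passage` type, the sample knowledge base, and the word-overlap scoring are all hypothetical stand-ins for a real retriever, which would typically use embeddings or a search index.

```python
import re
from dataclasses import dataclass


@dataclass
class Passage:
    source: str  # hypothetical document identifier
    text: str


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, passages: list[Passage], top_k: int = 1) -> list[Passage]:
    """Rank passages by word overlap with the question; drop non-matching ones."""
    q = tokenize(question)
    scored = [(len(q & tokenize(p.text)), p) for p in passages]
    scored = [(score, p) for score, p in scored if score > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [p for _, p in scored[:top_k]]


def build_prompt(question: str, passages: list[Passage]) -> str:
    """Build a prompt that contains only the retrieved passages."""
    context = "\n".join(f"[{p.source}] {p.text}" for p in passages)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


# Hypothetical public-facing help-center content.
KNOWLEDGE_BASE = [
    Passage("help/refunds", "Refunds are available within 30 days of purchase."),
    Passage("help/shipping", "Standard shipping takes 3 to 5 business days."),
    Passage("help/warranty", "The warranty covers hardware defects for one year."),
]

question = "How long does shipping take?"
top = retrieve(question, KNOWLEDGE_BASE)
prompt = build_prompt(question, top)
```

The point of the design is that `prompt` carries one short passage rather than the whole knowledge base, so the amount of content leaving your environment is bounded by `top_k`.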

Risk 3: Hallucinations and Inaccurate Answers

How It Manifests

Hallucination is a well-documented, systemic issue across all large language models — when uncertain, the model generates responses that sound plausible but aren't actually correct.

In an enterprise support context, this leads to:

- Incorrect quotes: a chatbot cites a price that doesn't match actual pricing

- Policy misunderstandings: an inaccurate answer causes a customer to misunderstand your refund or warranty policy

- Escalating complaints: a customer acts on a wrong AI answer, finds the reality doesn't match, and becomes more frustrated than before

- Legal exposure: incorrect advice leads to financial loss, and the business faces liability claims

Mitigation

Adopt a RAG architecture that ties chatbot responses to vetted content sources, with source citations users can click to verify.

When every answer is grounded in a document, hallucination drops significantly — and users can validate the answer themselves. Denser AI packages RAG and source citations natively into its platform, with the Denser Retriever handling retrieval precision underneath.
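
To make the grounding idea concrete, here is a hedged sketch of attaching citations to an answer and declining to answer when nothing supports it. The `Citation` and `GroundedAnswer` types, the fallback wording, and the example URL are assumptions for illustration; they do not describe any specific platform's implementation.

```python
from dataclasses import dataclass, field


@dataclass
class Citation:
    title: str
    url: str      # hypothetical help-center URL
    passage: str  # the exact text the answer relies on


@dataclass
class GroundedAnswer:
    text: str
    citations: list[Citation] = field(default_factory=list)


# Assumed fallback wording; adjust to your own support tone.
FALLBACK = "I couldn't find this in our documentation. Let me connect you with a support agent."


def finalize(draft: str, citations: list[Citation]) -> GroundedAnswer:
    """Release a draft answer only if at least one vetted passage backs it."""
    if not citations:
        return GroundedAnswer(FALLBACK)
    return GroundedAnswer(draft, citations)


def render(answer: GroundedAnswer) -> str:
    """Append numbered source links so users can verify the answer."""
    lines = [answer.text]
    for i, c in enumerate(answer.citations, start=1):
        lines.append(f"[{i}] {c.title}: {c.url}")
    return "\n".join(lines)


refund = Citation(
    "Refund policy",
    "https://example.com/help/refunds",
    "Refunds are available within 30 days of purchase.",
)
shown = render(finalize("Refunds are available within 30 days of purchase.", [refund]))
```

Refusing to release uncited drafts is a design choice made for this sketch: it trades some coverage for the guarantee that every displayed answer points at a document a user can check.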

Risk 4: Regulatory and Industry Compliance Risks

How It Manifests

Beyond data privacy, businesses face compliance pressure on three additional fronts:

- Industry-specific regulations: HIPAA (healthcare) prohibits medical information from entering unauthorized third-party systems; PCI-DSS (payments) prohibits processing cardholder data through unencrypted external services

- Algorithmic accountability regulations: if an AI system materially influences business decisions affecting customers, regulations may require those decisions to be explainable

- Child data protection: handling data from minors triggers additional protections under COPPA and similar regulations

Mitigation

Build an internal review process to assess data flow and regulatory fit before deploying any AI tool.

Choose platforms with relevant certifications (SOC 2 Type II, ISO 27001), and sign a data processing agreement before going live. Denser AI's pricing page lays out the platform's compliance and security details.

Risk 5: No Conversation Audit Trail

How It Manifests

Sooner or later a customer will say "the chatbot told me X", and if the conversation log is incomplete you cannot reconstruct what happened. Raw API integrations do not keep a conversation history for you; unless you build logging yourself, this means:

- No record of what the chatbot said — no way to diagnose what went wrong

- No visibility into which question types have the lowest accuracy

- When a complaint comes in, no complete conversation record to use as evidence

Mitigation

Choose a platform with built-in conversation logging so every interaction is captured in full.

Regular log reviews serve both compliance and ongoing accuracy improvement — a customer service chatbot with native logging makes this significantly easier to operationalize.
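
For teams that integrate the API directly, the custom logging mentioned above can start small. The following is a minimal sketch assuming a local JSON Lines file; the field names and the `log_turn` and `load_conversation` helpers are hypothetical, and a production system would also need retention rules, access controls, and redaction of any PII in the logged text.

```python
import json
import uuid
from datetime import datetime, timezone
from pathlib import Path


def log_turn(log_path: Path, conversation_id: str, question: str,
             answer: str, sources: list[str]) -> dict:
    """Append one question/answer turn to a JSON Lines audit log."""
    record = {
        "id": str(uuid.uuid4()),
        "conversation_id": conversation_id,
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "question": question,
        "answer": answer,
        "sources": sources,  # which documents the answer was grounded in
    }
    with log_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(record, ensure_ascii=False) + "\n")
    return record


def load_conversation(log_path: Path, conversation_id: str) -> list[dict]:
    """Return every logged turn for one conversation, in the order written."""
    with log_path.open(encoding="utf-8") as f:
        records = [json.loads(line) for line in f if line.strip()]
    return [r for r in records if r["conversation_id"] == conversation_id]
```

With a log like this, a complaint of the form "the chatbot told me X" can be checked by calling `load_conversation` with the customer's conversation ID and reading back exactly what was said and which sources were used.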

A 7-Step Mitigation Framework for AI Support Risks

With a clear picture of the five risk categories, here's a systematic framework for managing them — without giving up the efficiency benefits AI delivers.

| Mitigation | What to Do | Risk(s) Addressed |
|---|---|---|
| ① Scope your knowledge source | Limit the chatbot to vetted, public-facing content (help center, product pages). Explicitly exclude sensitive internal documents. | Internal leakage, hallucination |
| ② Use RAG to ground responses | Use a RAG architecture so every response is backed by a document, not the model's unconstrained generation. | Hallucination and inaccuracy |
| ③ Enable source citations | Attach a source document and passage to every response; users can click to verify. | Non-auditability, user distrust |
| ④ Configure access controls | Restrict which content the chatbot can access; set content permissions by user group. | Privacy exposure, internal leakage |
| ⑤ Set up human handoff | For sensitive or liability questions, configure auto-escalation triggers to a human agent. | Compliance risk, high-stakes scenarios |
| ⑥ Build conversation log audits | Log all conversations in full; regularly review low-quality responses and negative feedback. | Auditability, continuous improvement |
| ⑦ Keep the knowledge base current | Establish a content review cadence to keep the chatbot's knowledge base up to date. | Stale information, hallucination risk |
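
As an illustration of step ⑤, an auto-escalation trigger can be as simple as a rule that runs before the chatbot answers. The sketch below is hypothetical: the term list, the `Routing` type, and the rule of escalating when no passage was retrieved are assumptions for this example, and plain substring matching will also fire on unrelated words that contain a term.

```python
from dataclasses import dataclass

# Hypothetical trigger terms; tune these to your own policies.
SENSITIVE_TERMS = ("refund dispute", "lawyer", "legal", "chargeback", "medical", "lawsuit")


@dataclass
class Routing:
    escalate: bool
    reason: str


def route(question: str, retrieved_passages: int) -> Routing:
    """Decide whether a question goes to a human agent or to the chatbot."""
    lowered = question.lower()
    for term in SENSITIVE_TERMS:
        if term in lowered:
            return Routing(True, f"sensitive term: {term}")
    if retrieved_passages == 0:
        # Nothing in the knowledge base supports an answer, so do not guess.
        return Routing(True, "no supporting passage found")
    return Routing(False, "answerable from knowledge base")
```

For example, `route("I will talk to my lawyer about this", 2)` escalates because of the term match, while a routine shipping question with at least one retrieved passage stays with the chatbot.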

How Denser AI Addresses These Customer Service Risks

Turning the mitigation framework into reality requires platform-level support. Denser AI's product architecture maps to the risk dimensions above as follows:

| Risk dimension | Denser AI capability |
|---|---|
| Customer privacy risk | Chatbot answers from your knowledge base; no customer PII is passed to a general-purpose AI model |
| Internal knowledge leakage | Knowledge base content permissions are configurable; only the retrieved passage is sent to the AI, never a full document |
| Hallucination and inaccuracy | RAG architecture binds responses to document content; Source Citations tag every answer with its source |
| Auditability gaps | Built-in conversation logging, user feedback collection, and answer quality analytics dashboard |

Here's how Denser AI's content management capabilities work in practice:

Website / PDF / knowledge base training: you specify exactly which pages and documents the chatbot can access. The platform answers only from authorized content.

RAG retrieval: when a user asks a question, the system retrieves the most relevant passages from your knowledge base and passes only those to the AI — minimizing sensitive content exposure.

Source Citations: every response is automatically tagged with the source document and passage; users can click to verify, directly addressing distrust of black-box answers.

Conclusion

Whether ChatGPT is safe for business customer service depends on how you use it. Routing sensitive data through a general-purpose interface creates real risks; deploying the right architecture brings those risks to an acceptable level.

Seven principles cover the essentials: scope your knowledge source, ground answers with RAG, enable source citations, configure access controls, set up human handoff, build conversation log audits, and keep the knowledge base current.

Denser AI builds these controls into the platform, so support teams capture AI efficiency without separately engineering security and compliance.

FAQ About Using ChatGPT Safely for Customer Service

Is ChatGPT GDPR compliant?

ChatGPT itself doesn't make your deployment GDPR-compliant — that depends on how you handle customer data, whether you have a DPA in place, and how data flows through your system.

Can ChatGPT keep customer data private?

API data isn't used for training, but by default it is retained for up to 30 days for safety monitoring. For stricter privacy, use a platform that keeps customer queries within a closed knowledge base architecture.

How do I stop ChatGPT from giving wrong answers?

Ground responses in your own documents using RAG, and enable source citations so every answer is traceable. Denser AI handles both natively.

Is ChatGPT safe for healthcare or financial services?

Not by default — HIPAA and PCI-DSS prohibit sending regulated data to external systems without proper agreements. Use a platform with the right certifications and data controls.

What's the safest way to use ChatGPT for customer service?

Limit the knowledge source to vetted public content, ground answers with RAG, enable source citations, and use a platform with built-in conversation logging.