What is Retrieval-Augmented Generation (RAG)? 2026 Guide

Most AI chatbots make things up. They hallucinate dates, invent product features, and confidently quote policies that do not exist. The fix is not a smarter model. It is giving the model your actual data to read before it answers.

That is what Retrieval-Augmented Generation (RAG) does. Instead of relying only on what a language model learned during training, a RAG system retrieves relevant content from your documents, websites, or databases and uses that context to generate a grounded answer. Every response can cite the source it pulled from, so users (and your team) can verify it.

This guide walks through how RAG works, the tools and frameworks you can build with, where RAG commonly fails, and how teams use it across customer support, legal, healthcare, and e-commerce.

TL;DR#

- RAG (Retrieval-Augmented Generation) combines a retrieval step with a generative language model so AI answers are grounded in your real data, not just its training set.

- Why it matters: It cuts hallucinations, keeps answers current as your content changes, and adds source citations so users can verify every response.

- How it works: Index your data into a vector database, retrieve the most relevant chunks for each query, and pass them to an LLM as context for the answer.

- RAG vs fine-tuning: RAG picks up content changes as soon as the index is refreshed; fine-tuning requires retraining and is far more expensive.

- Best for: Customer support, internal knowledge search, legal and compliance review, e-commerce discovery, and any case where accuracy and source attribution matter.

- Try it: Denser AI is a no-code RAG platform you can deploy in minutes; Denser Retriever is the same engine exposed as a developer API.

What is Retrieval-Augmented Generation?#

Retrieval-Augmented Generation is an AI technique that improves the accuracy of large language models (LLMs) by giving them access to external data at query time. Instead of generating responses only from what the model learned during training, a RAG system first retrieves the most relevant passages from your knowledge base, then asks the LLM to answer using that retrieved context.

The result is a response that is grounded in your actual content, not the model's general world knowledge. That solves three of the biggest problems with vanilla LLMs: outdated information, hallucinations, and the inability to answer questions about your specific business. The original RAG approach was introduced by Patrick Lewis and colleagues in a 2020 research paper from Facebook AI Research (now Meta AI), University College London, and New York University, and it has become the standard architecture for production AI chatbots, search, and knowledge retrieval. For a deeper comparison of embedding model choices, see our breakdown of open-source vs paid embedding models.

Key Components of a RAG System#

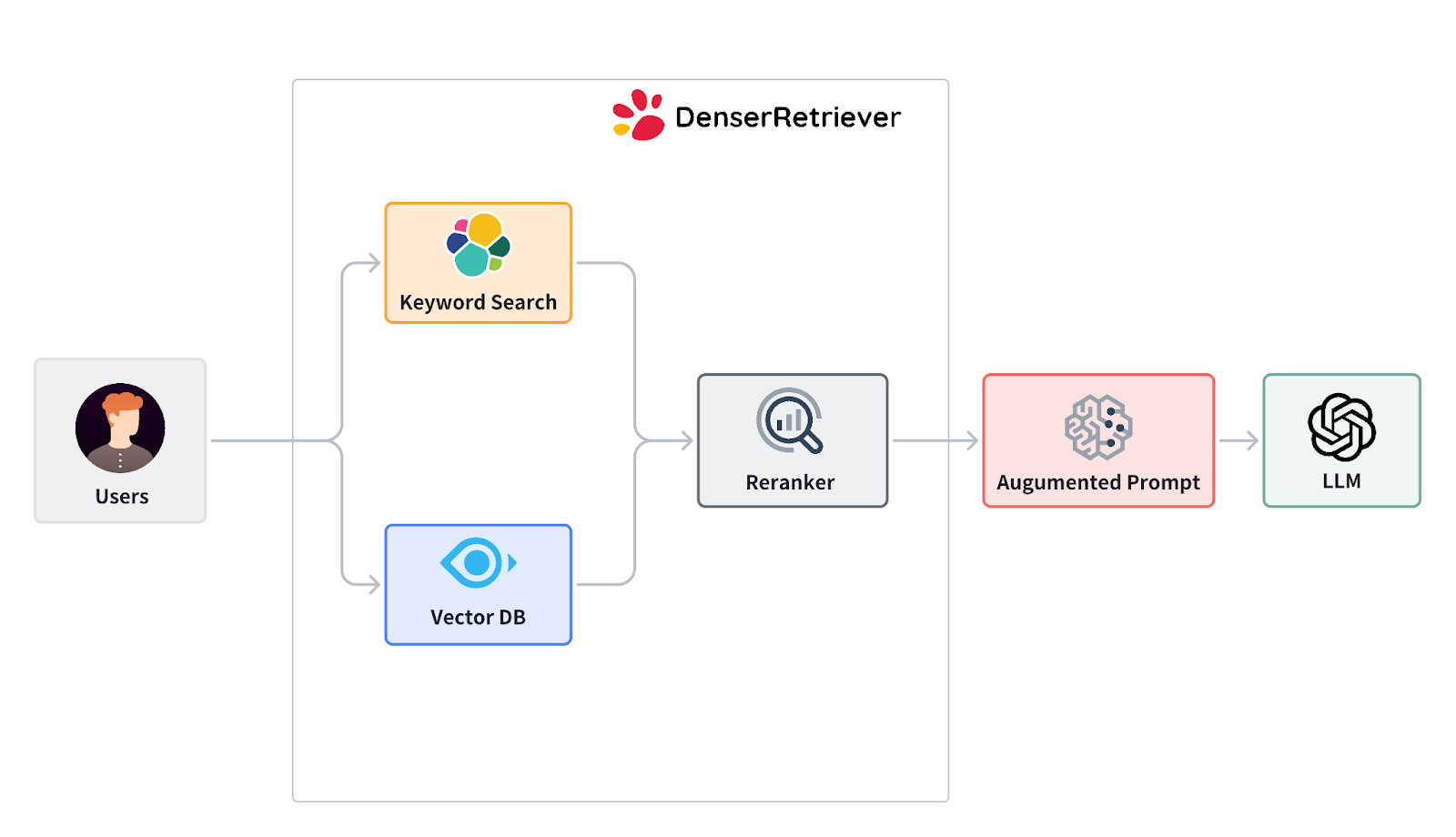

A RAG system has two core components working together: an Information Retrieval (IR) layer and a Natural Language Generation (NLG) layer.

Information Retrieval#

The IR layer searches your data and surfaces the passages most relevant to the user's question. Production systems typically combine three approaches:

- Keyword search for exact matches (product codes, SKUs, policy names)

- Semantic vector search for meaning-based matches (synonyms, paraphrases, intent)

- ML reranking to refine the top results before they reach the language model

This three-layer approach is what makes AI-native search engines more accurate than pure vector search. For teams comparing search platforms, our guide to Elasticsearch alternatives covers the strongest options.
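To make the combination of keyword and vector results concrete, here is a minimal sketch using reciprocal rank fusion (RRF), one common way to merge ranked lists. It is an illustration under stated assumptions, not the method any particular product uses: the document IDs are made up, `k=60` is a conventional default, and the reranking stage is left out.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Merge several ranked lists of document IDs into one ranking.

    Each document earns 1 / (k + rank) from every list it appears in,
    so documents ranked well by both searches rise to the top.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties are broken by ID for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical result lists from a keyword search and a vector search.
keyword_hits = ["sku-123", "returns", "shipping"]
vector_hits = ["returns", "refunds", "sku-123"]

fused = reciprocal_rank_fusion([keyword_hits, vector_hits])
print(fused)
```

Documents that appear in both lists ("returns" and "sku-123") end up ahead of documents found by only one search, which is the behavior hybrid retrieval is after.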

Natural Language Generation#

The NLG layer is the language model (GPT-4o, Claude, Gemini, or open-source alternatives) that reads the retrieved context and writes the final answer. Better LLMs help, but the quality of the retrieval layer matters more. A weak retriever paired with GPT-4o still produces bad answers. A strong retriever paired with a smaller model often outperforms that combination.

The two layers together let you train an AI assistant on your own data without retraining the underlying model.

Why Use RAG?#

Vanilla LLMs have three problems that RAG fixes.

Outdated training data. A model frozen at its training cutoff cannot tell you about your latest pricing, policies, or product updates. RAG retrieves from your live content, so answers stay current.

Hallucinations. Without grounding, an LLM will invent plausible-sounding details. RAG constrains the model to your actual documents, so it generates answers from real source material instead of guessing.

No knowledge of your business. A general-purpose LLM does not know your return policy, your product catalog, or your internal runbooks. RAG gives it that knowledge without expensive retraining.

For business teams, that translates to chatbots that resolve 60-80% of routine queries, support deflection that scales without adding headcount, and answers that include source links so users can verify what the AI tells them.

Build your own RAG chatbot in minutes with Denser AI. Free plan, no credit card required.

How Does RAG Work?#

The mechanics behind a RAG system are simple in concept. Here is the standard pipeline, step by step.

Step 1: Ingest and Parse Your Data#

Documents come in many formats: PDFs, Word, websites, Slack threads, database rows. The first step is parsing each source into clean text, preserving structure where it matters (headings, tables, code blocks). Modern systems handle JavaScript-heavy websites, scanned documents (via OCR), and structured data from databases.

For website content specifically, an AI web crawler handles parsing, JavaScript rendering, and Markdown conversion in one step.

Step 2: Chunk the Content#

Long documents need to be split into smaller passages so the retrieval layer can find specific information. Chunk sizes typically range from 200 to 1,000 tokens, with some overlap between chunks to preserve context across boundaries. Get this wrong and the retrieval quality drops sharply, even with a great vector database.
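As a rough illustration of chunking with overlap, the sketch below splits text on whitespace and treats words as a stand-in for tokens. That is an assumption made to keep the example dependency-free; a real pipeline would count tokens with the tokenizer of its embedding model and would usually respect headings and paragraph boundaries. The function name and defaults are hypothetical.

```python
def chunk_words(text, chunk_size=200, overlap=40):
    """Split text into overlapping chunks of roughly chunk_size words."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be >= 0 and smaller than chunk_size")
    words = text.split()
    step = chunk_size - overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the last window already reached the end of the text
    return chunks


# A synthetic 500-word document: w0 w1 ... w499
doc = " ".join(f"w{i}" for i in range(500))
chunks = chunk_words(doc, chunk_size=200, overlap=40)
print(len(chunks), [len(c.split()) for c in chunks])
```

Each chunk repeats the last 40 words of the previous one, so a sentence that straddles a boundary still appears whole in at least one chunk.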

Step 3: Embed and Index#

Each chunk is converted into a vector embedding (a numerical representation of meaning) and stored in a vector database. Common choices include Pinecone, Weaviate, Chroma, Qdrant, and Elasticsearch. The embedding model used here matters: better embeddings cluster related ideas closer together in vector space, which means more accurate retrieval.
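The sketch below shows the shape of the embed-and-index step with a toy embedding and an in-memory index. Everything here is an assumption for illustration: `toy_embed` hashes words into a small vector and captures no real meaning, and `InMemoryIndex` stands in for a vector database. A production system would call an embedding model and one of the databases listed above.

```python
import hashlib
import math

DIM = 64  # toy vector size; real embedding models use hundreds of dimensions or more


def toy_embed(text):
    """Hash each word into a bucket and L2-normalize (a stand-in for a real model)."""
    vec = [0.0] * DIM
    for word in text.lower().split():
        bucket = int(hashlib.md5(word.encode("utf-8")).hexdigest(), 16) % DIM
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


class InMemoryIndex:
    """Stores (chunk_id, text, vector) and searches by cosine similarity."""

    def __init__(self):
        self.items = []

    def add(self, chunk_id, text):
        self.items.append((chunk_id, text, toy_embed(text)))

    def search(self, query, top_k=3):
        q = toy_embed(query)
        # Vectors are normalized, so the dot product equals cosine similarity.
        scored = [
            (sum(a * b for a, b in zip(q, vec)), chunk_id, text)
            for chunk_id, text, vec in self.items
        ]
        scored.sort(key=lambda item: item[0], reverse=True)
        return scored[:top_k]


index = InMemoryIndex()
index.add("refunds", "refunds are issued within 14 days")
index.add("shipping", "shipping takes 3 to 5 business days")
index.add("hours", "our office is closed on public holidays")

top_score, top_id, top_text = index.search("refunds are issued within 14 days", top_k=1)[0]
print(top_id, round(top_score, 3))
```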

Step 4: Retrieve Relevant Context#

When a user submits a query, the system embeds the query, searches the vector database for the most semantically similar chunks, and (in production systems) applies a reranker to refine the top results. This is where the three-layer search architecture pays off: keyword matching catches exact terms the vector search might miss, and the reranker filters out passages that look similar but are not actually relevant.
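The reranking stage can be sketched as a second pass over the candidates the first-stage search returned. The scoring function below is a deliberately simple assumption (the share of query terms found in each passage); production systems typically use a trained model for this step. The candidate IDs and texts are made up.

```python
import re


def tokenize(text):
    """Lowercase and split into word tokens, dropping punctuation."""
    return set(re.findall(r"\w+", text.lower()))


def rerank(query, candidates, top_k=3):
    """Re-score (chunk_id, text) candidates and keep the best top_k.

    Toy scoring: fraction of query terms present in the passage.
    """
    query_terms = tokenize(query)

    def score(text):
        if not query_terms:
            return 0.0
        return len(query_terms & tokenize(text)) / len(query_terms)

    scored = [(score(text), chunk_id) for chunk_id, text in candidates]
    scored.sort(key=lambda item: item[0], reverse=True)  # stable sort keeps input order on ties
    return scored[:top_k]


# Hypothetical first-stage candidates, in the order the search returned them.
candidates = [
    ("faq-7", "Shipping policy for international orders"),
    ("policy-2", "Refund policy: a refund is issued within 14 days"),
    ("blog-9", "Our policy on holiday opening hours"),
]

top = rerank("refund policy days", candidates, top_k=2)
print(top)
```

The passage that first-stage search ranked second moves to the top because it matches every query term, while passages that only share the word "policy" drop down or out.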

Step 5: Generate the Answer#

The retrieved passages are added to the language model's prompt as context. The model then generates a response based on that retrieved content, ideally including citations that link back to the original source. Source citations are what make a RAG system trustworthy in production.
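A minimal sketch of how retrieved passages might be packed into a prompt with numbered sources follows. The prompt wording, the `build_prompt` name, and the passage fields are assumptions for illustration; the actual call to a language model is left out because it depends on the provider you use.

```python
def build_prompt(question, passages):
    """Assemble a grounded prompt from passages with 'source' and 'text' keys."""
    context = "\n".join(
        f"[{number}] ({passage['source']}) {passage['text']}"
        for number, passage in enumerate(passages, start=1)
    )
    return (
        "Answer the question using only the numbered context below. "
        "Cite the passages you used as [1], [2], and so on. "
        "If the context does not contain the answer, say you do not know.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\nAnswer:"
    )


# Hypothetical retrieved passages.
passages = [
    {"source": "https://example.com/returns", "text": "Refunds are issued within 14 days."},
    {"source": "https://example.com/shipping", "text": "Orders ship in 3 to 5 business days."},
]

prompt = build_prompt("How long do refunds take?", passages)
print(prompt)
```

Numbering the passages gives the model a compact way to cite, and the application can map each `[n]` in the answer back to its source URL.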

Step 6: Update the Knowledge Base#

Your data changes. A good RAG system re-crawls websites on a schedule, re-indexes updated documents, and lets you delete stale content. Without this, your chatbot gives confidently wrong answers from outdated material.
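One simple way to keep an index fresh is to compare content hashes between runs and only re-embed what changed. The sketch below assumes you store a hash per document at index time; the function names and document IDs are hypothetical, and the actual upsert and delete calls depend on your vector database.

```python
import hashlib


def content_hash(text):
    """Stable fingerprint of a document's text."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def plan_reindex(indexed_hashes, current_docs):
    """Decide what to re-embed and what to remove.

    indexed_hashes: {doc_id: hash} saved during the last indexing run.
    current_docs:   {doc_id: text} as crawled or loaded right now.
    """
    to_upsert = sorted(
        doc_id
        for doc_id, text in current_docs.items()
        if indexed_hashes.get(doc_id) != content_hash(text)
    )
    to_delete = sorted(doc_id for doc_id in indexed_hashes if doc_id not in current_docs)
    return to_upsert, to_delete


# State from the previous run.
indexed = {
    "pricing": content_hash("Pro plan: $20/month"),
    "returns": content_hash("Refunds within 14 days"),
    "old-promo": content_hash("Summer sale ends Friday"),
}
# Content as it exists today: pricing changed, a page was added, the promo is gone.
current = {
    "pricing": "Pro plan: $25/month",
    "returns": "Refunds within 14 days",
    "faq": "We ship worldwide",
}

to_upsert, to_delete = plan_reindex(indexed, current)
print(to_upsert, to_delete)
```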

RAG vs Fine-Tuning vs Prompt Engineering#

There are three ways to make a language model work for your use case. Each has its place.

| Approach | What It Does | Best For | Cost |
|---|---|---|---|
| Prompt Engineering | Crafts specific instructions to guide model output | Simple tasks, general topics | Lowest |
| Fine-Tuning | Adjusts model weights on your training data | Style and behavior changes | Highest |
| RAG | Retrieves your data at query time, feeds to LLM as context | Knowledge-grounded answers, accuracy, freshness | Mid |

Prompt Engineering#

Prompt engineering shapes the model's output by writing better instructions. It is fast and costs almost nothing beyond normal model usage, but it cannot give the model knowledge it does not already have. Best for general topics and quick prototypes.

Fine-Tuning#

Fine-tuning modifies the model itself by training it on additional data. This is useful for changing the model's writing style, tone, or specific behaviors. It is expensive, time-consuming, and requires ML expertise. Most importantly, fine-tuning does not solve the freshness problem: every time your content changes, you have to retrain.

RAG#

RAG combines the strengths of both. You get the customization of fine-tuning (the AI knows your business) without the retraining overhead. When your content changes, answers reflect it as soon as the index is refreshed. For most business AI chatbot use cases, RAG is the right starting point. For the few cases where you need to change the model's voice or specialized reasoning, fine-tuning can layer on top.

RAG Tools and Frameworks#

The RAG ecosystem in 2026 has matured into clear categories. Here are the main options across each layer of the stack.

Frameworks for Building RAG#

- LangChain: The most popular orchestration framework. Strong agent and multi-step workflow support. Steeper learning curve.

- LlamaIndex: Retrieval-first framework with strong document parsing and indexing. Easier for pure RAG use cases.

- Haystack: Production-focused framework with stronger pipeline composition. Popular in enterprise.

Vector Databases#

- Pinecone: Managed, production-ready, low-latency performance. Closed-source.

- Weaviate: Open-source with strong hybrid search. Self-hosted or managed.

- Chroma: Lightweight and developer-friendly. Best for prototypes and small projects.

- Qdrant: Open-source with strong filtering and metadata support.

- Elasticsearch: Battle-tested keyword search with vector capabilities added.

Managed RAG Platforms#

If you do not want to build the stack yourself, managed platforms collapse the pipeline into a single product:

- Denser AI: No-code platform that crawls your website (up to 100K+ pages), indexes documents, and ships a deployable chatbot with source citations. The same engine is available as a developer API through Denser Retriever.

- Enterprise RAG solutions: Purpose-built for large organizations with security, compliance, and scale needs.

For most business teams, a managed platform reaches production in minutes instead of the weeks-to-months a custom build takes. For developers with specific infrastructure requirements, frameworks like LangChain plus a vector database like Pinecone offer maximum flexibility.

Common RAG Failures and How to Fix Them#

Most failed RAG projects share the same root causes. Knowing them upfront saves weeks of rework.

Lost-in-the-middle retrieval. When you stuff too many retrieved chunks into the prompt, language models tend to ignore information in the middle. The fix is better reranking and tighter top-k limits (typically 3-5 chunks, not 20).

Bad chunking. Chunks that are too small lose context. Chunks that are too large dilute the relevant passage. Test chunk sizes between 200 and 1,000 tokens with 10-20% overlap and pick what scores best for your content type.

Stale knowledge base. A RAG system is only as fresh as its source data. If you index once and never re-crawl, your chatbot will give wrong answers as your content evolves. Schedule regular re-indexing (weekly or monthly depending on how often your content changes).

Weak retrieval, strong LLM. Teams often spend on the latest GPT-4o or Claude model and pair it with default vector search. The LLM is not the bottleneck. Invest in retrieval quality (hybrid search, reranking) before upgrading the model.

Hallucination from missing context. When the retrieval layer fails to find anything relevant, some LLMs invent an answer anyway. Configure the system to say "I don't know" when retrieval scores are below a threshold. This is non-negotiable for legal, healthcare, and financial use cases.
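The "I don't know" fallback can be sketched as a gate in front of the generation step. This is a minimal illustration under stated assumptions: `min_score=0.35` is an arbitrary placeholder that you would tune on your own data, and `generate` is a hypothetical callable standing in for the LLM call.

```python
FALLBACK = "I don't know. I could not find this in the knowledge base."


def answer_or_abstain(scored_chunks, generate, min_score=0.35):
    """Only call the model when at least one chunk clears the relevance threshold.

    scored_chunks: list of (score, text) pairs from the retriever.
    generate:      callable that takes a list of passages and returns an answer.
    """
    relevant = [text for score, text in scored_chunks if score >= min_score]
    if not relevant:
        return FALLBACK
    return generate(relevant)


def fake_generate(passages):
    """Stand-in for a real LLM call."""
    return f"Answer based on {len(passages)} passage(s)."


grounded = answer_or_abstain(
    [(0.82, "Refunds are issued within 14 days."), (0.12, "Office hours are 9 to 5.")],
    fake_generate,
)
abstained = answer_or_abstain([(0.10, "Office hours are 9 to 5.")], fake_generate)
print(grounded)
print(abstained)
```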

No source citations. If your RAG system generates answers without citations, users cannot verify them. For business adoption, citations should be the default, not a feature.

Evaluating RAG Quality#

You cannot improve what you do not measure. RAG evaluation has matured into a small set of standard metrics.

Faithfulness. Did the answer stay grounded in the retrieved context, or did the LLM invent details not present in the source? Faithfulness scoring catches hallucinations even when the retrieved context was correct.

Context precision. Of the chunks the retriever returned, how many were actually relevant? Low precision means your retriever is bringing back noise.

Context recall. Did the retriever surface all the relevant chunks needed to answer the question? Low recall means relevant content exists but is not being retrieved.

Answer relevance. Does the generated answer actually address what the user asked?
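Context precision and context recall can be illustrated with a simple set-based calculation over chunk IDs. This is a simplification for intuition only: it assumes you have hand-labeled which chunks are relevant for a question, and evaluation frameworks may define and compute these metrics differently (for example, with an LLM as the judge). The chunk IDs are made up.

```python
def context_precision(retrieved, relevant):
    """Share of retrieved chunks that are actually relevant."""
    if not retrieved:
        return 0.0
    hits = sum(1 for chunk_id in retrieved if chunk_id in relevant)
    return hits / len(retrieved)


def context_recall(retrieved, relevant):
    """Share of relevant chunks that the retriever managed to surface."""
    if not relevant:
        return 0.0  # undefined without labels; reported as 0.0 in this sketch
    hits = sum(1 for chunk_id in relevant if chunk_id in retrieved)
    return hits / len(relevant)


# Hypothetical labels for one test question.
retrieved = ["c1", "c4", "c7", "c9"]  # what the retriever returned
relevant = {"c1", "c2", "c7"}         # what a human marked as needed

precision = context_precision(retrieved, relevant)
recall = context_recall(retrieved, relevant)
print(f"precision={precision:.2f} recall={recall:.2f}")
```

Here half of the returned chunks are noise (precision 0.50) and one needed chunk was missed (recall 0.67), which points at the retriever rather than the language model.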

Open-source frameworks like RAGAS and DeepEval automate scoring across these dimensions. For production deployments, also track business metrics: support ticket deflection rate, average resolution time, conversation-to-conversion rate, and customer satisfaction scores.

What's Next: Agentic and Multimodal RAG#

RAG is evolving fast. Two trends are reshaping how teams use it.

Agentic RAG. Instead of a single retrieve-then-generate pass, agentic systems plan multi-step workflows: search the knowledge base, query a database for live data, call an API, then synthesize the result. The chatbot moves from "answers questions" to "completes tasks". For example, a customer asks "where is my order and can I change the shipping address?" An agentic RAG system retrieves the order policy from documents, queries the order database for the shipment status, and calls the shipping API to update the address, all in one conversation.
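The order example can be sketched as a small multi-step workflow. Note the assumptions: the three tool functions are hypothetical stubs that return canned data, and the sequence of steps is hard-coded here, whereas a real agentic system would let the language model decide which tools to call and in what order.

```python
# Hypothetical stub tools; real ones would hit a retriever, a database, and an API.
def search_docs(query):
    return "Shipping addresses can be changed until the order ships."


def get_order_status(order_id):
    return {"order_id": order_id, "status": "processing"}


def update_shipping_address(order_id, address):
    return {"order_id": order_id, "address": address, "updated": True}


def handle_order_request(order_id, new_address):
    """Retrieve the policy, check live order data, then act if the policy allows it."""
    steps = []

    policy = search_docs("change shipping address")
    steps.append("search_docs")

    order = get_order_status(order_id)
    steps.append("get_order_status")

    if order["status"] == "shipped":
        reply = f"Order {order_id} has already shipped, so the address cannot be changed."
    else:
        update_shipping_address(order_id, new_address)
        steps.append("update_shipping_address")
        reply = (
            f"Order {order_id} is {order['status']}. "
            f"The shipping address is now {new_address}."
        )
    return {"reply": reply, "policy": policy, "steps": steps}


result = handle_order_request("A-1001", "12 Example Street")
print(result["reply"])
print(result["steps"])
```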

Multimodal RAG. Early RAG systems only handled text. Newer systems index images, charts, audio transcripts, and video frames alongside text, then retrieve across all modalities. A user can show a product photo and ask "do you have this in another size?" and the chatbot finds the right SKU.

These are not science-fiction roadmap items. Both are in production today, on platforms like Denser AI and across the open-source ecosystem.

Industry Use Cases for RAG#

RAG has moved from research to widespread adoption. Here is where it produces the most value today.

Customer support. The most common use case. RAG chatbots trained on help docs, policy pages, and product manuals deflect 60-80% of routine tickets while citing source pages so customers can verify. See our guide to building an AI customer service chatbot.

Legal and compliance. Lawyers query contract clauses, regulations, and case law in natural language. Source citations are mandatory: every answer must trace to a specific paragraph. Visual highlighting in the original document (which Denser AI provides) is what makes this workable for legal teams.

Healthcare. Clinicians and patient support teams retrieve from medical guidelines, drug databases, and treatment protocols. HIPAA compliance and on-premise or private-cloud deployment options are required. Verified source attribution is non-negotiable.

Financial services. Compliance teams use RAG to find specific clauses in regulatory filings. Customer support teams answer policy questions with traceable sources. Audit trails and role-based access matter as much as accuracy.

E-commerce and retail. Product discovery in natural language ("comfortable shoes for long walks") returns the right SKU even when the wording does not match the product title. Combined with live database queries, RAG chatbots also handle order status, inventory checks, and recommendations.

Internal knowledge management. Employees search wikis, runbooks, HR docs, and engineering documentation in plain language instead of guessing the right keyword. Strong internal knowledge bases deflect repetitive Slack questions and cut onboarding time for new hires.

Denser Retriever: Open-Source RAG Infrastructure#

The Denser Retriever project is the open-source retrieval engine behind Denser AI. It combines keyword search (Elasticsearch), semantic vector search, and gradient-boosted ML reranking (XGBoost) into a single platform.

On the MS MARCO benchmark, Denser Retriever achieved a relative NDCG@10 gain of 13.07% over the strongest vector search baseline, demonstrating that hybrid retrieval with ML reranking outperforms pure vector approaches.
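For readers unfamiliar with the metric, here is a minimal sketch of how NDCG@10 and a relative gain are computed. It uses the linear-gain form of DCG and, as a simplification, derives the ideal ordering from the judged list itself rather than from every relevant document in the collection. The relevance labels and the scores 0.40 and 0.50 are made-up numbers for illustration, not benchmark results.

```python
import math


def dcg_at_k(relevances, k=10):
    """Discounted cumulative gain: relevance discounted by log2 of the rank."""
    return sum(
        rel / math.log2(rank + 1)
        for rank, rel in enumerate(relevances[:k], start=1)
    )


def ndcg_at_k(relevances, k=10):
    """DCG divided by the DCG of the best possible ordering of the same labels."""
    ideal = dcg_at_k(sorted(relevances, reverse=True), k)
    return dcg_at_k(relevances, k) / ideal if ideal > 0 else 0.0


def relative_gain(new_score, baseline_score):
    """Improvement of new_score over baseline_score, as a fraction of the baseline."""
    return (new_score - baseline_score) / baseline_score


# Relevance labels of the returned results, in ranked order (1 = relevant).
ranked_labels = [1, 0, 1]
score = ndcg_at_k(ranked_labels, k=10)
print(round(score, 4))

# Illustrative only: a system scoring 0.50 against a baseline of 0.40.
gain = relative_gain(0.50, 0.40)
print(f"{gain:.0%}")
```

A relative gain compares two NDCG scores as a ratio, so a 13.07% relative gain means the hybrid score is about 1.13 times the baseline score, not 13 percentage points higher.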

For developers building custom RAG pipelines, Denser Retriever is available as:

- REST API: Hosted retrieval with no infrastructure to manage. See the Denser Retriever platform.

- Open-source: Full source on the Denser Retriever GitHub repository. Self-host with full control.

- Documentation: Denser Retriever docs for setup and integration.

For teams that want a complete RAG chatbot without writing code, Denser AI wraps the same engine in a no-code platform with deployment to website, Slack, Shopify, WordPress, and more.

Get Started With RAG#

The fastest way to see what RAG can do for your business is to try it on your own content.

- No-code path: Sign up for Denser AI free, paste your website URL, and have a working chatbot in minutes.

- Developer path: Try the Denser Retriever API or self-host the open-source engine.

- Enterprise path: Book a demo to discuss RAG deployment at scale, including on-premise, private cloud, and compliance options.

For a deeper look at specific RAG applications, see our guides on RAG chatbots, chat with PDF, and enterprise RAG.

FAQs About Retrieval-Augmented Generation#

What is the difference between RAG and generative AI?#

Generative AI refers broadly to AI models that produce content (text, images, code) from training data. RAG is a specific architecture that adds a retrieval step before generation, so the AI grounds its responses in external data instead of generating purely from its training set. Most production AI chatbots in 2026 are RAG systems.

Is RAG better than fine-tuning?#

For most business use cases, yes. RAG is faster to deploy, cheaper to maintain, and stays current as your content changes. Fine-tuning is better when you need to change the model's writing style or specialized reasoning patterns. Many production systems combine both: RAG for knowledge, fine-tuning for behavior.

What role does semantic search play in RAG?#

Semantic search is the retrieval engine inside most RAG systems. Instead of matching exact keywords, semantic search uses vector embeddings to find passages with similar meaning. This is what lets a RAG chatbot match "how do I get a refund" with content titled "return policy" even though no keywords overlap.

Do I need a vector database to build RAG?#

You need some way to store and search embeddings. Production systems use vector databases like Pinecone, Weaviate, or Qdrant. Lightweight systems can use libraries like FAISS or even SQLite with a vector extension. Managed platforms like Denser AI handle this for you.

Can RAG work with images and other non-text data?#

Yes. Multimodal RAG indexes images, charts, audio transcripts, and video alongside text, then retrieves across all modalities. This is becoming the default in 2026 for product discovery, technical documentation, and any use case where visuals matter.

How accurate is a RAG system?#

Accuracy depends on three things: source data quality, retrieval quality, and the LLM. Well-tuned RAG systems with hybrid retrieval and reranking typically resolve 60-80% of customer queries correctly without human intervention. Source citations let users verify every answer, which raises trust even further.

How long does it take to deploy RAG?#

With a managed platform like Denser AI, minutes to a few hours. With a custom build using LangChain plus a vector database plus an LLM, typically weeks. Enterprise deployments with compliance reviews and integrations can take 4-12 weeks regardless of platform choice.